1. 引言

机载LiDAR能快速获取大面积、高密度、高精度的三维点云数据,被广泛用于数字城市建立、数字地面模型获取、海岸线探测等领域;三维点云数据的解析及分类在场景理解和兴趣目标建模等方面具有重要的意义,因此点云分类成为了目前的一个研究热点 [1] [2]。

近年来,基于机器学习理论的分类方法得到了广泛的发展 [3]。目前,主要利用基于局部特征的机器学习分类器 [4] 来对点云进行分类,如支持向量机分类器 [5],随机森林分类器 [6] 等。由于机器学习特征提取过程中存在过分割或者欠分割 [7] 等问题,不能对点云进行精细分类。当物体边缘存在分类误差时,分类的误差会对模型参数造成极大的影响。此外有研究工作者将上下文相关的思想引入点云分类中,虽然取得了一定的进展 [4] [8],但是其计算效率及复杂度方面还存在一定的不足。

针对上述分类误差的情况,本文引入了图割的方法,结合初始点云分类的结果 [9] 对地物的整体特性进行提取,进而优化分类效果并提高分类精度。

2. 前背景图割及其用于点云分类

2.1. 图割原理

图割的方法最初设计用于解决能量最小化问题,此问题在机器视觉类别标定领域有较强的通用性。在类别标定过程中,同时考虑标签同对象的符合程度以及对象间的相互关系,可以将定义于马尔科夫随机场中的后验概率转换为能量模型,将标定问题转换为能量最小化问题 [10]。在能量模型中,对每一个位置(site)

设置一定的标签

,其中

为n个位置,

为一组k个标签。因此标定问题为建立P和L间的映射关系,通过此映射就可以确定所有位置的标签

。能量函数一般为非凸函数,具有许多局部最小值,因此其求解过程相当复杂,为NP难问题 [10]。一般能量函数定义为:

(1)

式中,

对相邻位置间的相似程度进行测量,

对标签f和观测数据间的符合程度进行测量,当数据与标签符合很好时,此能量值很小,反之,当数据与标签不一致时,能量值较大。

通常可以写为:

(2)

式中

表示单个位置p与其标签 值的符合程度。

值的符合程度。

的选取对于能量的优化效果具有很重要的作用。一般将

表示为:

(3)

式中,N为相互作用的成对位置集合,通常为相邻的位置对。不同数据位置对关系使用不同的方法进行表示。

为每一对位置

独立设置相应的惩罚值;惩罚值的设置在许多应用中具有极其重要的作用,其直接关系到优化结果的好坏。因此,

的选取对能量函数的求解具有很重要的作用。以下对图割的定义及求解方式进行阐述。

2.1.1. 图割定义

图

包括一组节点V以及一组连接,分为有向图和无向图两种。为了方便,本文使用无向图。在此图中,相邻的节点对表示为:

。相邻节点的边E的集合。图的普通节点在点云分割中,表示为三维点。同时图中还包括两种独特的节点:终端节点S和T,分别代表前景对象节点和背景节点。相邻普通节点间的边为n-连接,普通节点与终端节点间的边为t-连接。所有的边都有一定的权重

。

如图1所示,图割

为一组边的组合,C将图中不同的终端节点分割开,形成新的图

。

Figure 1. S-T diagram: S represents foreground object node, T represents background node. The thickness of the edge is related to its weight, and the dotted line is used to separate the foreground and background points

图1. S-T图割示意图:S表示为前景对象节点,T表示为背景节点。边的粗细与其权重值相关,虚线用来分割出前景点和背景点

定义为图割的代价(cost),由图割中所有边的权重之和表示。最小割问题即寻找具有最小代价的图割。此问题一般可以转换成寻找终端节点间的最大流问题,从而可以高效求解 [11]。

图割的代价与能量函数(公式(1))可以建立一定的关系,如可以将图割的代价表示为能量值与常数之和。因此可以通过最小化图割的代价,进而解求得到能量的最优值 [10]。

图G中所有不同类型的边的权重定义如下(见表1)。

Table 1. Weights of different sides in Figure 1

表1. 图1中不同边的权重

上表中,K的取值为:

(4)

通过求取上述定义图的最小可行图割C,就可以解得能量(公式(1))的最小值 [12]。

2.1.2. 点云分割中图定义

由上文所述,图由节点和边组成。点云中图的节点为激光点,图中的边由相邻激光点间的边表示。由于点云由散乱的激光点组成,其结构复杂,没有明显的邻接关系,因此在搜索相邻激光点时,本文引入了K邻近(KNN)算法。为了加速KNN搜索,本文同时使用R树 [13] 建立原始点云的索引。

图中相邻点间边的权重赋值的原则为:边的长度越短,其权重就应该越大,随着长度的增长,其权重也逐渐缩小。如果连接相邻点p和pq的i边的长度为

,其权重定义为:

(5)

式中,设定为点云数据的平均点间距。

同时在文中,还需要对点p到两种终端节点的t-连接权重进行定义。此权重同上述相邻边的权重相似,当p距离对象种子点较近时,其为前景点的可能性较大,反之,其可能为背景点。在计算过程中,需要选择距离p点最近的对象种子点以及背景种子点,进行权重计算。设定权重方程为:

(6)

(6)

式中,

为点p到最近背景种子节点的距离,

为点p到最近前景对象种子节点的距离。

2.1.3. 前背景种子点选取

两种终端节点的种子点选取是前背景图割的关键。种子点的选取越精确,后续的分割结构也越精确。高效率的自动种子点选取方法得到了较广泛的应用,但是自动选取过程中,种子点的提取精度同人工交互方式相比较差,有可能出现漏选或者错选的情况。

本文结合点云初始分类结果提出了一种高精度的前背景种子点自动选取方法,具体思想介绍如下。

利用局部分类器分类的点云具有较高的准确率;同时点云误分点分布呈现局部性以及数量少等特点 [9]。在种子的点选取过程中,基于初始分类结果需要提取出稳定可靠的正确分类物体,同时排除误分点。因此本文首先利用区域增长的方式对属于同类的邻近点进行聚类。

在区域增长聚类过程中,有三种关键的参数需要设置,类别,聚类距离,K近邻搜索。

1) 类别:聚类过程中,只对初始种子点类别相同的点进行聚类;

2) 聚类距离:由于不同类别点云在同一数据集中的点间距不同,例如地面和建筑物点的间距小于植被点的间距等。此参数需要采用训练数据进行训练得到;

3) 在K近邻搜索中,首先对数据集构建R-索引 [13],在构建索引的基础上,搜素选取种子的K个邻近点。

具体聚类过程为:1) 首先选取一未聚类的种子点,判读其类别;2) 依次将其K个邻近点中具有相同类别且距离在一定范围内的点归并到区域内;3) 依次对区域内未经增长的点的邻近点继续判断是否归并至区域内,直至没有可以归并的点。

依次对聚类后的点簇进行判别,具有较多点数的点簇,其分类结果为可靠的;反之,具有较少点的点簇为非可靠的,其内部点的类别可能具有较大的错误率。以点云数量为阈值,对聚类后的点簇进行可靠性判断。该阈值同物体的类别、体积以及点云的密度等因素都具有较大的关系。在计算过程中,相关参数设置复杂,不同场景中的物体大小也有所不同。因此本文基于训练样本对此不同类别地物的阈值进行选取。通过对此图代价最小的可行图割进行求取就可以最小化能量函数(公式(1)) [12],进而对场景中的前景物体进行分割提取。

2.2. 基于前背景图割的点云精细分类流程

在点云分类优化过程中,需要对整体场景中的点云误分情况进行修正。首先选取一个区域,依照此区域中点的类别以及点的数量判断此区域中物体是否可靠;然后依次对可靠物体及其周围邻域内的点构建图G进行前背景图割分割。由于背景种子的选取直接关系到对于分割结果的好坏,依据背景种子一定部位待分割物体上的点的选取准则,选取其周围内其他可靠物体上的点作为背景种子点,待提取可靠物体上的点则作为前景种子点。对于每一待提取可靠物体的分割包括以下方面:

1) 邻域系统:首先计算待提取物体分割区域外接最小立方体,然后将此立方体进行扩展,以包含其周围点;

2) 种子点选取:将待提取的可靠物体上的点设置为前景种子点,同时将邻域内的其他可靠物体上的点设置为背景种子点;

3) 前背景图割:对邻域系统内的所有点搜索其K近邻点,建立图的n-连接;同时对邻域系统内所有点搜索其最近的前背景种子点,建立图的t-连接。图的边的权重按照公式(5)和(6)进行计算。建立好图后,便可以采用前背景图割技术对前景对象进行提取。

图割分割完毕后,对分割到待提取物体上的所有点的类别进行优化,使被误分割到前景中的非前背景种子点的类别调整为前景物体的类别。

基于前背景图割的点云分类结果优化流程如图2所示。

Figure 2. Cut is used for precise classification of point clouds

图2. 图割用于点云精确分类

3. 试验和分析

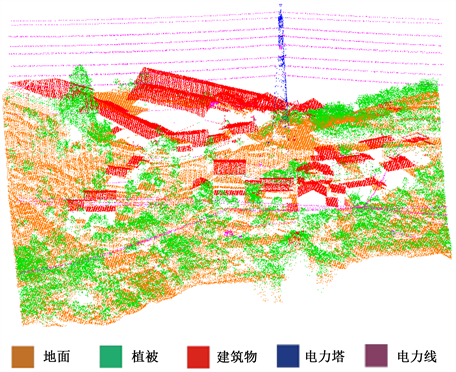

本文使用的试验数据为电力应用激光扫描数据,点云密度为60点/m2。为了验证算法的有效性,从原始数据中选取两个数据集合:训练集和测试集;训练集大小约为100万点,测试集大小约为500万点。目标类别分别为地面,植被,建筑物,电力塔,电力线。首先,从训练集中随机选取每类别中的4000个样本集用于对JointBoost分类器 [14] 进行训练;剩余的训练数据作为验证集对分类器的参数(弱分类器数量)及邻域大小进行分析及选取 [15]。训练好的分类器可以对测试集进行分类验证。实验环境采用Intel I7处理器,16 G内存的计算机。

在点云分类过程中我们首先采用JointBoost分类器对点云进行分类,然后采用图割的方法对分类结果进行改进。

3.1. 地物精确提取

除了一些众所周知的英文缩写,如IP、CPU、FDA,所有的英文缩写在文中第一次出现时都应该给出其全称。文章标题中尽量避免使用生僻的英文缩写。电力塔是电力场景中极其重要的一种地物。由于分布复杂,它的点云分类结果一般存在较为严重的误分情况,因此精确提取电力塔,获取其位置、姿态等关键信息对于保障电力正常的输送以及损坏后的重建显得尤为重要。

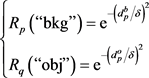

本文将电力塔作为主要研究对象,引入图割分类优化方法对电力塔进行精确提取。图3为电力塔分类及其周围区域点云前背景图割结果图。从图3(a)中可以看出,点云初始分类后存在一定的误分情况,主要集中在电力塔同其他类别物体(植被、电力线等)相交的区域以及点云密度稀疏甚至缺失的区域。在分割过程中,选择可靠的电力塔点为前景点,同时选择电力塔周围其他可靠的物体,如电力线,植被等作为背景点(图3(b))。图3(c)显示了前背景图割结果,可以看出分割后,电力塔整体得到了精确提取,同时也使得电力塔与周围物体的边界点被准确分类。

Figure 3. Pre-background cutting of the power tower and its surrounding points: (a) The initial classification results of point cloud obtained by using JointBoost and local features; (b) The foreground seed points are orange, the background seed points are green, and the black points are points on unreliable objects; (c) Segmentation results

图3. 对电力塔及其周围点进行前背景图割:(a) 使用JointBoost以及局部特征获得的点云初始分类结果;(b) 前景种子点为橘黄色,背景种子点为绿色,黑色点为非可靠物体上的点;(c) 分割结果

3.2. 点云分类结果优化

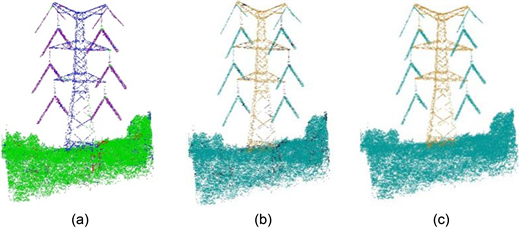

通过优化场景中所有物体的局部分类误差结果来对场景中的不同地物经行精确分类。而基于JointBoost的地面分类结果精度很高,在前背景图割过程中,为了节省计算时间,不对可靠地面物体进行分割。在分割过程中,依次对区域中可靠物体及其扩展邻域内点进行前背景图割,分割结果如图4所示。可以看出分割后,结果具有光滑性。

Figure 4. Segmentation results of objects of other categories except ground points in the first region. In the figure, different colors represent the foreground object points after segmentation, while black represents the background points

图4. 一区域中除地面点外其他类别物体分割结果。图中不同的彩色表示分割后的前景地物点,黑色表示背景点

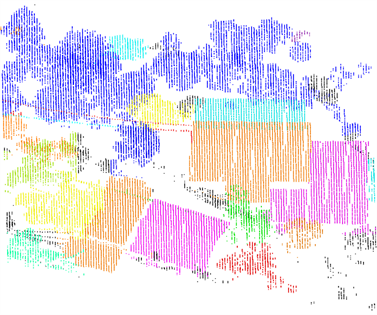

分割结束后,对非可靠物体中被误分割到前景对象中点的类别进行优化,重新标记其类别为前景对象(图5)。调整的后的前景对象,同初始分类结果相比,其局部分类误差得到了降低,分类精度得到了明显改善。

Figure 5. Extraction results of foreground object

图5. 前景对象提取结果

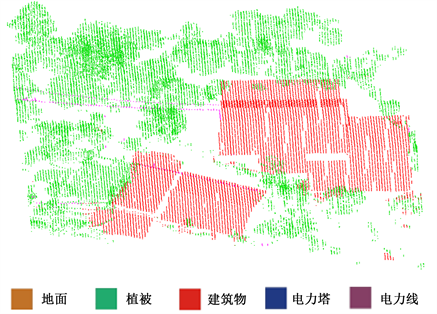

分类优化后,点云分类效果较初始分类结果有了一定的提升,整体准确率由93.8%提升到95.9%;同时,分类结果具有光滑的特性,物体内部具有较少的误差点,不同物体间边界清晰。同初始分类结果相比,优化后的结果在精度以及可视化方面都有了提升(图6)。

从以上的分类结果,可以看出利用图割的思想优化点云分类结果确实可行。从根本上来说,由于JointBoost在提取局部特征过程中会出现过分割或者欠分割的情况,导致分类结果存在局部误差,不能对点云进行精细分类。而能量函数的大小能够量化点云分类结果的好坏。当能量函数取得最小值时,即表示数据与分类的标签相符合。同时图割能够解决能量最小化问题。因此本文定义点云分类中的能量函数,将能量优化问题转换成图割问题,通过最小化图割的代价进而解求得到能量的最优值进而优化JointBoost分类器初始分类结果。图割分割完毕后,对分割到待提取物体上的所有点的类别进行优化,使被误分割到前景中的非前背景种子点的类别调整为前景物体的类别。实验结果也证明了分类结果更加光滑,分类精度大大提高。

Figure 6. Optimization results of point cloud classification

图6. 点云分类优化结果

4. 结论

本文主要对复杂场景中激光扫描数据的精确分类及地物提取进行了研究。基于局部特征的机器学习分类方法存在分类误差的情况,本文引入前背景图割的方法,对物体整体信息进行提取,进而改进了整体分类效果;重点对该方法中图的建立以及前背景种子点的选取等关键点进行了分析。

在图的建立过程中,引入了KNN邻域系统,将点云中邻近激光点构建成图的边,并且按照边的长度赋予其权重。由于误差点具有局部性的特征,本文采用区域增长的方式提取出可靠的物体,将待提取可靠物体上的点设置为前景种子点,其他可靠物体上的点则为背景种子点,通过求取最小图割解,将对象从背景中分割提取进而优化点云分类结果。

为了验证本文算法的有效性,首先通过实验分析了电力塔的提取情况,分割后的物体内部具有光滑性,同时与其他物体间具有明显的间隔。然后对大场景的点云分类优化效果进行分析,分类精度得到了一定程度的提升。在后续的实验完善过程中,还需要进一步对种子点进行精确选取,同时优化能量函数的定义以及求解精度效率等。

点云分类对于后续的地物目标建模及三维虚拟城市具有建立重大意义。而复杂场景的点云分类研究仍是一项有挑战的任务,未来还需在分类方法、提高分类精度及实时化等方面进行更加深入的研究。