1. 引言

随着数据科学和计算机硬件能力的发展,人们处理大数据量的高维张量成为可能。张量是多维数据建模的强大工具,如彩色图像、视频、高光谱图像等。以图像和视频数据为代表的高阶张量数据在传输过程中,数据会不可避免地受到各种噪声环境的影响,例如:椒盐噪声、高斯噪声、泊松噪声等,从被污染的图像视频中恢复干净数据成为图像视频处理的一个基本和必要的步骤。通常的恢复处理过程利用了数据固有的先验特征,如低秩性、稀疏性和局部相似性 [1] [2] [3]。其中基于低秩的方法,例如低秩矩阵逼近(LRMA)和低秩张量逼近(LRTA) [4] [5],近年已经得到广泛的研究。

主成分分析(PCA)方法是处理恢复问题的一种基本的低秩矩阵逼近方法,为了提高PCA模型的鲁棒性,Candes提出了鲁棒主成分分析(RPCA)方法 [6]。然而,低秩矩阵逼近的主要问题是仅限于处理矩阵数据,无法恢复或者要经过特殊处理才能恢复张量数据。将基于矩阵的方法拓展为3阶的张量情况需要能够处理张量的基本数学工具,这里与矩阵的情况不同,张量秩的定义到目前为止不是唯一的。至今,研究人员已经给出了多种张量秩定义以及对应的凸松弛。其中,CP秩 [7] 依赖于CP分解,它的值是张量分解后秩一张量的具体数目。然而,CP秩的精确计算已被证明是NPhard。Tucker秩 [8] 受到更广泛的应用,并且Liu等提出了SNN方法作为Tucker秩的凸替代,但是SNN仍然不是Tucker秩的紧凸替代。除此之外,还有其他的张量秩定义,比如张量环秩、张量链秩等,可以参考文献 [9] [10] [11]。特别的,Kilmer等 [12] 将张量作为一个整体来研究张量的奇异值分解(t-SVD)。在此基础上,Lu等 [13] 提出了张量主成分分析(TRPCA)方法使得我们可以在特定的条件下从观察到的三阶张量

中恢复低秩部分

和稀疏部分

。TRPCA和这一类的基于核范数的低秩张量逼近方法 [14] [15] [16] 能在保持数据结构完整的前提下有效地解决实际问题。

虽然这些方法普遍取得了良好的成果,但仍有两个主要挑战:1) 一些现有的低秩逼近方法可能需要预先定义秩的值;2) 这些方法主要处理矩阵和3阶张量数据,虽然一些低秩张量逼近方法可以通过重塑数据来处理高阶数据,但是这种处理会破坏数据的全局空间结构。鉴于上述缺点,本文利用 [17] 中提出的启发式的处理高阶张量的循环思想,设计了高阶张量的代数框架和相应的高阶张量SVD分解方法。同时,定义了一个新的张量正向秩以及高阶张量核范数。最后基于上述要素,本文设计出全新的低秩高阶张量逼近方法(LRHA),它能够从高阶张量数据(阶数

)中恢复出潜在的低秩部分。具体表达形式为:

,其中

是目标低秩张量,

为稀疏误差项,

为高斯噪声项。

本工作的贡献总结如下:1) 推导出高阶张量的共轭性质,并将其应用于张量积和张量奇异值分解运算,这种改进相对于不加共轭的原方法将节省一定计算成本;2) 利用提出的基于张量正向秩的张量核范数,成功地完善了LRHA所需的代数框架并给出了完整算法;3) 通过对多项图像视频的恢复任务验证了LRHA方法的恢复能力,理论上可以从任意维数的观测数据中恢复低正向秩部分。

2. 符号和数学框架

2.1. 符号

本节介绍了文中使用的一些符号。实数域和复数域分别记为

和

。本文用黑体欧拉字母表示张量,上标表示张量的相应维数,例如一个p阶张量可以记为

,矩阵用黑体大写字母表示,例如

,向量以黑体小写字母表示,例如

,用小写字母表示标量,例如a。已知一个阶3张量

可以看成是矩阵的组合,称之为切片。对于任意p阶张量

也可以看成是低维张量的组合,本文称之为子张量,它通过固定原始张量的部分维数而得到。由于本文对高阶张量的处理一般从最后一个维度逆向一直到倒数第二个维度为止,将

简写为

,

。特别的,

的正向切片定义为

,它是一个二阶子张量并且可以被记为

。并且本文称大小为

的子张量

为管子张量。对于任意

,它的复共轭定义为

,

即取

每一个元素的复共轭。本文定义p阶张量的

-范数为

,无穷范数定义为

。

2.2. DFT共轭性质

离散傅里叶变换(DFT)在本文算法运算时起着核心作用。已知对

的傅里叶变换后结果为

,记为

,其中

是DFT矩阵,已知

的循环矩阵可以用DFT矩阵对角化,

其中

表示一个对角矩阵,其第i个对角项为

。

引理2.1 对于

,张量

,

,i从3到p。

是一个块对角矩阵,它的第

对角块

是

的第

正向切片,我们有,

(2.1)

;

;

;

;

。

上述引理的证明使用到了块循环矩阵

可通过傅里叶变换对角化的性质,即

对于

的任意元素,我们都能根据(2.1)的索引在

的某一个相应的正向切片中找到它的共轭。令

且

,上面的等式可以简记

。其中

且

。

2.3. 代数框架

对于

,本文定义

,

,

算子将

按照第p维度展开为

个大小为

的张量且

是它的逆算子。

,

,

,

其中

是

。

算子将

展开为它的块循环矩阵

;

算子将

展开为大小为

的矩阵且

是它的逆算子

。

定义2.1 令

。那么张量积

,

是大小为

的p阶张量,

引理2.1提供了一种更有效的方法来计算高阶张量积。例如,

和

是两个5阶张量。如果想要得到它们之间的乘积,按标准方法需要125次矩阵乘积。但如果使用共轭性质,我们只需要计算63次乘积。

定义2.2 令

,它的共轭转置为

,即

。

定义2.3 令

,当它的第一个正向切片为大小为

的单位矩阵其他切片全为0时称它为单位张量。对于合适大小的

有

。

定义2.4 令

,若

,便称这个实值张量是一个p阶正交张量。

定义2.5 如果张量的每一个正向切片都是对角矩阵,则该张量称为f-对角张量。

定理2.6 令

是一个p阶实值张量。那么

能被分解为

其中

,

是正交张量,且

是一个f-对角张量。

已知矩阵的奇异值具有递减性质,在t-SVD的处理过程中,令

,

的第一个正向切片对角线上元素

具有相同的递减性质:

,

,

。可以通过DFT的处理得到以下性质,

(2.2)

定义2.7对于

,张量正向秩(或张量1,2维秩)为

,张量正向秩的值为

的非零管子张量的个数,它们是通过对

进行t-SVD得到的,即

(2.3)

实际上张量正向秩等于

非零项的个数。显然,我们可以通过旋转张量即改变张量模式(mode)的排列顺序得到类似的秩。例如,p阶张量

的1,4维秩(

)是通过固定一维和四维,然后进行t-SVD得到的。从图1可以看出,无论t-SVD是基于一个相对较低的正向秩还是基于1,4维秩,都可以在很小的相应秩的情况下很好地逼近原始视频,这证明了图像视频数据通常有一定的低秩性,从而保证低秩高阶张量逼近的合理性。

(a) 原始视频的特定帧

(a) 原始视频的特定帧  (b) 30正向秩PSNR = 36.1

(b) 30正向秩PSNR = 36.1  (c) 10 1,4维秩PSNR = 45.7

(c) 10 1,4维秩PSNR = 45.7

Figure 1. Color video can be approximated by a low-rank tensor

图1. 彩色视频可以用低秩张量近似

定义2.8 令

作为

的t-SVD结果。

的张量核范数定义为

(2.4)

其中

。

3. 低秩高阶张量逼近(LRHA)算法

本文最初的问题是从高度污染的测量值

中恢复所需要的低秩张量

,

为稀疏误差项而

为高斯噪声项。这一节将展示了求解该问题算法的详细过程,为了公式简洁,在本节中略去张量右上角的标识。本文采用标准的交替方向乘子法(ADMM)来解决目标问题,首先将原问题具体表示为:

(3.1)

其中

是事先预定好的平衡参数。

是潜在的低秩张量,

是稀疏误差,

是高斯噪声项。下面给出原问题的增广拉格朗日函数:

(3.2)

其中

是拉格朗日乘子,

是正惩罚参数。总的ADMM算法见算法1。

3.1. 更新

通过固定其他变量,更新

的子问题可以写作:

(3.3)

其中

。在三阶张量的情形下,可以将问题转化到矩阵奇异值阈值(SVT)解决。类似的,本文引入近端算子,从而使得上述问题等价于下式:

(3.4)

根据高阶张量的分解过程,可以根据文献 [13] 得到SVT算子作为上式的封闭解。令

,对于任意

,t-SVT算子

,其中

,i从p到3。

3.2. 更新

和

通过固定其他变量,更新

的子问题可以写作:

(3.5)

其中

。上式(3.5)的封闭解可以通过软阈值算子得到 [18],即

其中

。类似的,更新

的子问题可以写作:

(3.6)

其中

。(3.6)的封闭解为:

4. 实验

在这一章中,本文应用LRHA进行多种视频恢复任务来验证该方法恢复高阶张量数据的能力,包括视频补全、视频去噪以及视频背景建模。为了实验的一致性,本文在所有实验中设置

,

。在实际应用中,对于不同的数据可以通过更仔细地调出不同的最优参数进一步提高性能。

4.1. 视频补全

在本节中,彩色视频被用来从部分观测项中进行低秩张量补全来评估本文的方法的实用性。张量补全相当于对于只受到椒盐噪声的张量进行恢复处理。本文使用yuv彩色视频数据1进行测试。对于实验中的每个视频序列,为了控制计算成本而使用前150帧进行测试,格式为网站提供的QCIF格式,其中每一帧大小为144 × 176 × 3。在缺失值的设置上,对于一个大小为的彩色视频张量,随机设置

个元素来观察,在本实验中,考虑

,

和

。同时本文应用HaLRTC [8],TMac [19],NRTAC [20],TNN [13] 这四种先进的张量修补方法进行视频恢复,并比较它们的性能。在实验中,这些比较方法均使用了参考文献中建议的参数设置,其中TNN是将数据的前两个维度直接拼接,再进行3阶的张量补全。实验中用于判断视频补全质量标准的PSNR值计算公式为:

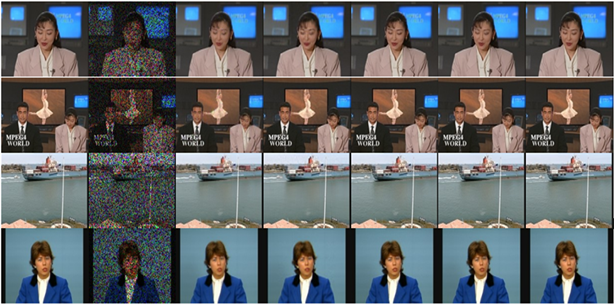

越高的PSNR值证明恢复的效果越好。实验结果见表1,部分结果见图2,图2中第一列为各个视频的样帧,第二列为该样帧特定采样率下的观测(比例为

),后列分别为HaLRTC,TMac,NRTAC,TNN和本文的方法恢复的结果。显然,无论是PSNR值还是直观的恢复效果,都是本文的方法最优的,证明了LRHA方法在张量补全上的适用性。

4.2. 视频去噪

本节应用LRHA对随机噪声干扰下的彩色视频图像进行恢复。考虑计算成本,每一个视频都被预先设置为大小为144 × 176 × 3 × 100的4阶张量。对于每一个视频,本文添加了

的脉冲噪声以及

的高斯噪声,同时本文应用基于SNN [21],TNN的两种高阶张量去噪方法进行比较,并且添加了LRHA的DCT变体(即傅里叶变换时的DFT矩阵替换为DCT矩阵)来验证共轭方法是否能节省计算成本。在实验中,这些比较方法均使用了参考文献中建议的参数设置,其中基于TNN的方法TRPCA仍然是将数据的前两个维度直接拼接,再进行3阶的去噪。

Figure 2. Comparison of video completion performance

图2. 视频补全表现比较

Table 1. PSNR values of different tensor completion methods

表1. 不同张量补全方法的PSNR指标

图3是视频去噪结果,第一列为视频样帧,第二列该样帧加噪后的观测,后列分别为SNN,TRPCA,本文的方法以及它的DCT变体恢复的结果。从实验结果表2和图3可以看出以下几点:首先基于Tucker秩的SNN方法在时间以及PSNR指标上都要次于基于管秩的TNN方法,其原因主要是因为SNN并不是目标的凸包络,以及Tucker方法普遍耗时;本文的方法与TRPCA相比,时间上要稍微长一些,原因是高阶张量的t-SVD需要更多的傅里叶变换过程,但由于LRHA相较而言能够充分利用空间结构信息,恢复效果普遍优于TRPCA;使用共轭方法的LRHADFT在恢复效果上接近于DCT变体,但在时间指标上具有明显的提升。

Figure 3. Comparison of video denoising performance

图3. 视频去噪表现比较

Table 2. PSNR comparison of video denoising

表2. 视频去噪PSNR比较

4.3. 视频背景建模

本节应用LRHA对随机噪声干扰下的彩色视频图像进行背景建模处理。本节数据为I2R数据2,为了得到较好的背景建模效果,每一个视频都被预先设置为大小为h × w × 3 × 300的4阶张量,并添加了

的脉冲噪声以及

的高斯噪声,由于处理前的前景已经受到噪声污染,后文的前景图像是通过恢复的背景图像与干净图像直接相减得到的。图4展示了LRHA方法对不同视频进行背景建模的结果,每一列从上到下依次为:原始图像,噪声图像,前景,背景。可以看出该方法能够在复杂噪声情况下能够去除噪声以及运动中的人物,从而较好地完成背景建模任务。

5. 总结

本文提出了一种新的低秩高阶张量逼近方法,利用高阶张量代数框架将原本只能作用于2阶或者3阶数据的低秩逼近方法拓展到任意维度,并设计了完整的算法。该方法与传统低维方法的优势在于能够利用张量整体结构信息。张量补全、张量去噪、张量背景建模实验证明了本文的方法可以在采用适当参数的前提下,有效地对以彩色视频为代表的高阶张量进行恢复处理,为对应现实问题提供了一种实用的解决方案。

致谢

感谢导师的精心指导以及各位同门在百忙之中提供的无私帮助,感谢测试数据所有者的无私奉献。在论文的写作过程中引用了学界大量的研究成果,在此谨向这些成果的作者表示衷心的感谢。

NOTES

1http://trace.eas.asu.edu/yuv/.

2http://perception.i2r.astar.edu.sg/bkmodel/bkindex.html.